AI-RAN Explained: Why NVIDIA Is Rebuilding the World’s Wireless Networks

On March 1, 2026, at Mobile World Congress in Barcelona, NVIDIA’s Jensen Huang announced something that got less attention than it deserved: a coalition of 12 major companies, including BT Group, Deutsche Telekom, T-Mobile, Nokia, Ericsson, SoftBank, and Cisco, has committed to building the world’s next generation of wireless networks on AI-native, open, software-defined platforms.

This is not a product launch. It is a fundamental architectural shift in how mobile networks are built — and it has implications for every device you own.

What Is AI-RAN, and Why Does the Old Architecture Fall Short?

RAN stands for Radio Access Network, the part of a mobile network that connects your phone to cell towers. For decades, this infrastructure has been built on purpose-specific hardware: specialized chips for specific functions, updated by swapping physical equipment. It was expensive, slow to upgrade, and increasingly mismatched with what modern networks need to do.

AI-RAN changes the architecture entirely. Instead of purpose-built hardware, it runs the radio access network on GPU-accelerated, software-defined infrastructure, the same kind of platform that powers AI workloads. The key insight: if your network runs on a common compute platform, you can update it with software rather than hardware, run AI and RAN workloads simultaneously on the same infrastructure, and add new capabilities continuously.
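To make the idea of sharing one compute platform concrete, here is a minimal sketch of a per-time-slot scheduler that admits RAN jobs first and fills whatever GPU capacity is left with AI jobs. Everything in it is an assumption for illustration: the `Job` type, the `schedule_slot` function, the job names, and the capacity fractions are hypothetical and do not reflect how NVIDIA's platform or any vendor's scheduler actually works.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    kind: str         # "ran" or "ai" (hypothetical labels)
    gpu_share: float  # fraction of one GPU needed in this time slot


def schedule_slot(jobs, capacity=1.0):
    """Admit RAN jobs first, then fit AI jobs into the leftover capacity.

    Returns the admitted job names and the capacity still unused.
    """
    admitted, used = [], 0.0
    # Sorting on a boolean puts RAN jobs (False) ahead of AI jobs (True);
    # Python's sort is stable, so input order is kept within each kind.
    for job in sorted(jobs, key=lambda j: j.kind != "ran"):
        if used + job.gpu_share <= capacity + 1e-9:
            admitted.append(job.name)
            used += job.gpu_share
    return admitted, round(capacity - used, 6)


jobs = [
    Job("llm-inference", "ai", 0.5),
    Job("cell-A-phy", "ran", 0.3),
    Job("cell-B-phy", "ran", 0.3),
    Job("video-analytics", "ai", 0.3),
]
admitted, spare = schedule_slot(jobs)
print(admitted, spare)
```

In this toy run the two radio jobs are always served, the large AI job is deferred because it no longer fits, and the smaller AI job uses capacity that would otherwise sit idle. A real system would also have to respect hard real-time deadlines for the radio workload, which this sketch ignores.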

NVIDIA’s AI Aerial platform is the foundation. It provides a unified reference architecture for 5G and 6G networks, covering Open-RAN, Centralized-RAN, Distributed-RAN, and Cloud-RAN deployments, all on the same GPU-accelerated compute platform.

The Performance Numbers Are Significant

This isn’t theoretical. ODC (Open RAN Development Company), which integrated its AI-RAN software with NVIDIA’s platform, reported substantial gains over traditional CPU-based virtual RAN architectures in a demonstration at NVIDIA’s GTC DC event. The company claimed 40x faster physical layer signal processing, a 7x increase in cell capacity, and 3.5x higher power efficiency per cell. The AI-RAN Alliance now counts over 130 participating companies driving this shift.

NVIDIA also invested $1 billion in Nokia, which is porting its RAN software, including the most processor-intensive Layer 1, onto NVIDIA’s GPU platform. T-Mobile confirmed it will begin trials of a Nokia/NVIDIA AI-RAN design in 2026.

Three Ways AI Gets Embedded in the Network

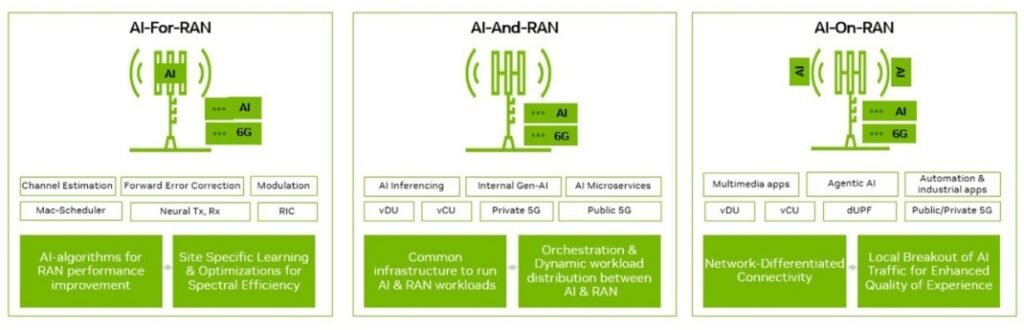

Understanding AI-RAN requires distinguishing three different roles AI plays:

AI-for-RAN uses AI to optimize how the radio network operates: things like spectrum allocation, beam management, and interference mitigation. A MITRE-developed application demonstrated at GTC can manage wireless spectrum allocation within a cell site in real time, targeting and blocking only interfering frequencies without interrupting service to other users.
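The idea of blocking only the interfering frequencies while leaving other users alone can be illustrated with a toy example. This is not the MITRE application or its algorithm, which the article does not describe. It is a simple threshold rule, and the function names, the -90 dBm threshold, the channel readings, and the user labels are all hypothetical.

```python
def split_channels(interference_dbm, threshold_dbm=-90.0):
    """Classify channel indices as usable or blocked by measured interference."""
    usable, blocked = [], []
    for ch, level in enumerate(interference_dbm):
        (blocked if level > threshold_dbm else usable).append(ch)
    return usable, blocked


def reassign(assignments, usable, blocked):
    """Move only the users on blocked channels; everyone else keeps theirs."""
    taken = {ch for ch in assignments.values() if ch not in blocked}
    free = [ch for ch in usable if ch not in taken]
    result = {}
    for user, ch in assignments.items():
        if ch in blocked:
            # None means no clean channel was available for this user.
            result[user] = free.pop(0) if free else None
        else:
            result[user] = ch
    return result


# Hypothetical per-channel interference readings in dBm.
readings = [-105.0, -72.0, -101.0, -98.0, -65.0, -110.0]
usable, blocked = split_channels(readings)
plan = reassign({"ue1": 0, "ue2": 1, "ue3": 3}, usable, blocked)
print(blocked, plan)
```

Here channels 1 and 4 are blocked, only `ue2` is moved to a clean channel, and `ue1` and `ue3` are untouched. An AI-driven system would presumably replace the fixed threshold with a learned model and react continuously, but the selective nature of the action is the same.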

AI-on-RAN runs AI applications at the network edge on the same infrastructure as the RAN itself. This enables ultra-low-latency AI inference for applications like autonomous vehicles, industrial robotics, and real-time AR/VR that can’t afford the round-trip time to a distant cloud data center.
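A rough back-of-the-envelope calculation shows why distance matters. Light in optical fiber travels at about two-thirds of its speed in a vacuum, or roughly 200 km per millisecond, so propagation delay alone puts a floor under the round trip. The sketch below uses that figure; the distances, the 5 ms inference time, and the 10 ms budget are hypothetical values chosen for illustration, and the calculation ignores queuing, routing, and radio-link delays.

```python
# Approximate propagation speed in optical fiber: about 200 km per millisecond.
FIBER_KM_PER_MS = 200.0


def round_trip_ms(distance_km):
    """Propagation-only round-trip time over fiber, in milliseconds."""
    return 2 * distance_km / FIBER_KM_PER_MS


def fits_budget(distance_km, inference_ms, budget_ms):
    """True if network round trip plus inference time stays within the budget."""
    return round_trip_ms(distance_km) + inference_ms <= budget_ms


# Hypothetical scenario: 5 ms of inference and a 10 ms end-to-end budget.
for label, km in [("distant cloud region", 1500), ("nearby cell site", 10)]:
    print(label, round_trip_ms(km), fits_budget(km, 5.0, 10.0))
```

Under these assumptions a data center 1,500 km away spends 15 ms on propagation alone and misses the budget before any computation happens, while a site 10 km away adds only 0.1 ms.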

AI-native RAN is the end state: a radio access network where AI is embedded in the signal processing itself, enabling a new class of capabilities not possible with traditional approaches.

Why 5G to 6G Becomes a Software Upgrade

One of the most consequential aspects of AI-RAN is the upgrade path it creates. With the current hardware-defined architecture, moving from 4G to 5G required replacing physical equipment at thousands of cell sites, a years-long process with a price tag in the hundreds of billions. AI-RAN, built on software-defined, GPU-accelerated infrastructure, is designed so that operators can move from 5G to 6G through software upgrades alone.

NVIDIA’s Aerial RAN Computer (ARC) Pro is built specifically for this: a single platform that runs 5G, 6G, and AI workloads together at existing cell sites. The goal is that operators don’t rip and replace; they update, like pushing a software patch.

The Privacy and Control Trade-Off

AI-native networks introduce new questions about who has visibility into network behavior. When AI manages spectrum, interference, and routing in real time, the same infrastructure that optimizes your call quality also has granular visibility into network usage patterns. This is a legitimate policy concern that the industry hasn’t fully resolved.

Supporters argue that AI-RAN’s open, software-defined architecture is more auditable than the black-box hardware it replaces. Critics note that concentrating network intelligence on a handful of platforms, NVIDIA’s GPU infrastructure chief among them, creates new dependency risks and potential single points of failure.

What This Means for You

The immediate impact is invisible: better network performance, more efficient spectrum use, fewer dropped connections in dense environments. The medium-term impact is more interesting: genuinely low-latency edge AI inference means applications that currently require a powerful device processor could run on modest hardware by offloading computation to the network edge.

6G, when it arrives (the target timeframe is the early 2030s), will be built on AI-RAN foundations that are being laid right now. The decisions being made at Mobile World Congress 2026 about architecture, openness, and vendor ecosystems will shape connectivity for the next two decades.

NVIDIA’s move into telecom infrastructure is also strategically significant. The company has dominated AI training and inference in data centers. AI-RAN extends that reach to the physical network layer, making NVIDIA’s GPU architecture potentially as foundational to wireless connectivity as it already is to cloud computing.
